Just recently I received a great question in the Q&A section of my website.

"We have a 500 cfm air compressor with loading setpoint of 5.5 bar and unloading setpoint of 7.0 bar. A consultant visited our site and said we are wasting 90k. What is wrong with these setpoints?"

So what did the consultant mean by that? Why is this guy wasting money?

Compressed air is expensive. It's expensive to produce and easy to waste. On average, using compressed air is 8 to 10 times more expensive than using electricity directly - but compressed air has a lot of advantages, and that's why we use it. But in many systems, a lot of compressed air is wasted. In an average compressed air system, about 30% of the energy is wasted and could be saved. For a medium-sized system, that means thousands of dollars per year of wasted electricity.

Saving Money On Compressed Air

There are two main strategies for saving (not wasting) money on compressed air:

- lower the amount of compressed air we use

- lower the pressure

The second strategy needs a bit more explaining: as the pressure rises, the amount of work a compressor needs to do to compress the air increases as well. The higher the pressure, the more power the electric motor needs to rotate the screws of the air compressor.

higher pressure = more expensive, lower pressure = less expensive

There are many ways to apply both of these main strategies. This question, and the consultant's remark, is about strategy number 2: the pressure.

Pressure setpoints

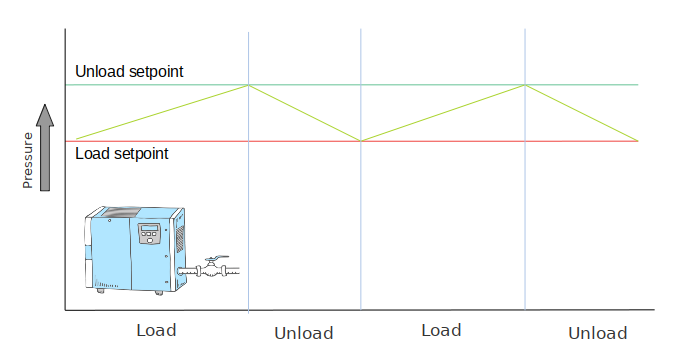

Rotary screw compressors have two setpoints: a lower setpoint, where the compressor starts up/loads, and an upper setpoint, where the compressor unloads/stops. (This is not true for VSD / variable speed compressors, which have only one setpoint.)

*Example load and unload setpoints*


The load setpoint

The load setpoint is the minimum pressure we need for our application. If the pressure drops lower, the equipment starts to malfunction. That's why the compressor starts up, or 'loads', at the lower setpoint, the 'loading pressure'.

In the compressed air system of the question, the load setpoint is 5.5 bar. So what about the unload setpoint?

The unload setpoint

We need 5.5 bar as a minimum, but unfortunately air compressors can't output a single pressure, like 5.5 bar. They output a certain volume of air per minute that they push into the compressed air system. And because air is compressible, this makes the air pressure rise. (compare it to a pump that pumps water into a water tower. Pumping water in makes the water level rise, using water makes the water level drop)

As long as the air production is larger than the air consumption, the pressure will rise. So there's an upper limit, the unload setpoint, where the compressor will stop. In this case it's 7.0 bar.

When the pressure reaches 7.0 bar, the compressor stops and the pressure slowly drops until it reaches the load pressure (5.5 bar), where the compressor starts/loads again.
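The load/unload behaviour described above is a simple two-point (hysteresis) control. Here is a minimal sketch in Python, using the 5.5 and 7.0 bar setpoints from the question. The function names and the fixed rise and fall rates are made up for illustration only; a real controller and a real system will behave less neatly.

```python
def next_state(loaded: bool, pressure_bar: float,
               load_bar: float = 5.5, unload_bar: float = 7.0) -> bool:
    """Return True if the compressor should be loaded after this pressure reading."""
    if pressure_bar <= load_bar:
        return True       # pressure at or below the load setpoint: load
    if pressure_bar >= unload_bar:
        return False      # pressure at or above the unload setpoint: unload
    return loaded         # inside the band: keep doing what we were doing


def simulate(steps: int, rise_bar: float = 0.25, fall_bar: float = 0.125):
    """Toy simulation: pressure rises while loaded and falls while unloaded.

    rise_bar and fall_bar are hypothetical per-step pressure changes.
    """
    pressure, loaded = 5.5, True
    history = []
    for _ in range(steps):
        pressure += rise_bar if loaded else -fall_bar
        loaded = next_state(loaded, pressure)
        history.append((pressure, loaded))
    return history


for pressure, loaded in simulate(20):
    print(f"{pressure:.3f} bar  {'loaded' if loaded else 'unloaded'}")
```

The pressure bounces between the two setpoints and never settles on a single value, which is exactly the behaviour the two setpoints are there to manage.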

Pressure differential

The difference between the load and the unload setpoint is called the pressure differential. In an ideal world, with a perfect air compressor, no pressure drops, etc, the pressure differential would be 0 and the pressure would always be steady at exactly the pressure we need.

But in the real world, the pressure differential is needed for the correct operation of our air compressor. Since it can't output a single steady pressure, it needs these two pressures to bounce between.

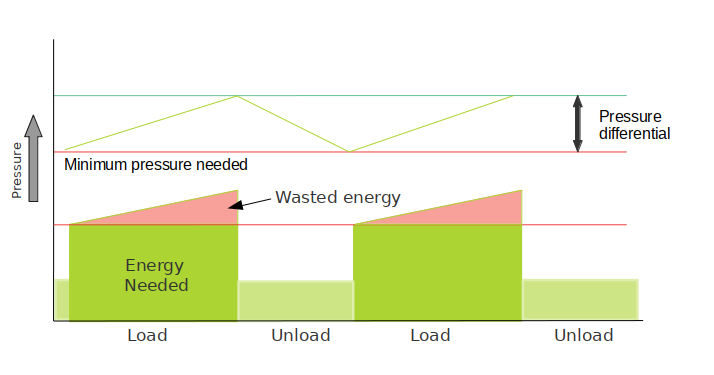

Wasted energy

But remember two things I previously mentioned:

- Every increase in pressure costs extra energy (=money)

- We only need the lower pressure (load pressure) for our application.

In other words, that pressure differential, on top of the minimum pressure we need, is all wasted energy. So we want to keep it as small as possible.

Load-unload energy use


Calculations

The question mentions a 500 cfm air compressor. 500 cfm ≈ 14.2 m3/min
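For anyone who wants to check the conversion, here is a small sketch. The only fact it relies on is that one cubic foot is 0.0283168 m3; the function names are just for illustration.

```python
CUBIC_FOOT_IN_M3 = 0.0283168  # one cubic foot expressed in cubic metres


def cfm_to_m3_per_min(cfm: float) -> float:
    """Convert a flow in cubic feet per minute to cubic metres per minute."""
    return cfm * CUBIC_FOOT_IN_M3


def m3_per_min_to_cfm(m3_per_min: float) -> float:
    """Convert a flow in cubic metres per minute to cubic feet per minute."""
    return m3_per_min / CUBIC_FOOT_IN_M3


print(f"500 cfm = {cfm_to_m3_per_min(500):.2f} m3/min")
```

For sizing purposes, rounding this to roughly 14 m3/min is close enough.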

A quick search for different models of air compressors shows us that for 500 cfm / 14 m3/min, we need a compressor of around 75 kW.

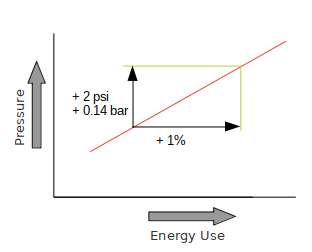

A general rule of thumb is this:

- For every 2 psi increase in pressure, we need 1% extra energy.

- Or: for every 0.14 bar increase in pressure, we use 1% extra energy.

- Or: for every 1 bar increase in pressure, we use 7.1% extra energy.
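The rule of thumb is a simple linear relation, so it can be sketched as a one-line function. This assumes the 7.1% per bar figure holds over the whole range we care about, which is only an approximation; the constant and function name are chosen here for illustration.

```python
PERCENT_PER_BAR = 7.1  # rule of thumb: about 1% per 0.14 bar (2 psi)


def energy_change_percent(delta_bar: float) -> float:
    """Approximate change in energy use (%) for a change in pressure (bar).

    A positive delta_bar means higher pressure and more energy,
    a negative one means lower pressure and less energy.
    """
    return delta_bar * PERCENT_PER_BAR


for delta in (0.1, 0.5, 1.0, 2.0):
    print(f"{delta:.1f} bar -> about {energy_change_percent(delta):.1f}% energy")
```

Treat the result as a rough estimate: the real figure depends on the machine and its operating point.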

Pressure vs energy use


So what numbers do we use for our example? Let's simply use the average pressure, and take 7.5 bar as the unload setpoint (note that this is half a bar above the 7.0 bar mentioned in the question). In reality, the true average will probably be a bit higher than the midpoint, since the compressor output is higher at lower pressures and air consumption increases at higher pressure, so the 'line up' from 5.5 to 7.5 bar is more of a curve that flattens out at the top than a straight line.

Load = 5.5 bar, Unload = 7.5 bar, Average = 6.5 bar

Now let's say we can move the unload pressure down to 6.5 bar. The new average pressure is 6 bar.

Load = 5.5 bar, Unload = 6.5 bar, Average = 6.0 bar

So our average win is 0.5 bar.

According to the rule of thumb, 0.5 bar equals a change of around 3.5% in energy use. We don't know the exact motor power at each pressure, and it will differ a bit between machines. But let's do some guessing: say the electric motor is 95% efficient. For an output power of around 75 kW, we need 75 / 0.95 = 79 kW of electrical input power.

Let's say this is the power needed to run at an average pressure of 6.5 bar.

At 6.5 bar average pressure: if we run this compressor for 8,000 hours a year (almost full-time, 24/7) and electricity costs us $0.10 / kWh, our annual electricity bill is 8000 * 79 * 0.10 = $63,200 per year.

At 6.0 bar average pressure: according to our rule of thumb, every 1 bar equals 7.1% energy use. So our 0.5 bar average decrease in pressure results in half of that, about 3.5% energy savings: $63,200 * 3.5% = $2,212 per year.

Over the lifetime of the air compressor (10 years?) that's more than twenty-two thousand dollars!
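The whole calculation fits in a few lines of Python, which makes it easy to plug in your own numbers. This is a rough sketch using the same guesses as above (75 kW, 95% motor efficiency, 8,000 hours, $0.10 / kWh, 7.1% per bar); the function names are only for illustration. Because it skips the intermediate rounding, the results come out slightly different from the hand calculation (roughly $63,160 and $2,240), but the conclusion is the same.

```python
def annual_cost(shaft_kw: float, motor_efficiency: float,
                hours_per_year: float, price_per_kwh: float) -> float:
    """Annual electricity cost of a compressor running at constant power."""
    input_kw = shaft_kw / motor_efficiency   # electrical input power
    return input_kw * hours_per_year * price_per_kwh


def annual_savings(cost: float, pressure_drop_bar: float,
                   percent_per_bar: float = 7.1) -> float:
    """Estimated yearly savings from lowering the average pressure."""
    return cost * pressure_drop_bar * percent_per_bar / 100


cost = annual_cost(shaft_kw=75, motor_efficiency=0.95,
                   hours_per_year=8000, price_per_kwh=0.10)
savings = annual_savings(cost, pressure_drop_bar=0.5)

print(f"annual electricity cost: ${cost:,.0f}")
print(f"savings per year:        ${savings:,.0f}")
print(f"savings over 10 years:   ${10 * savings:,.0f}")
```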

How low can we set the unload pressure / pressure differential?

Knowing this, we of course would try to lower the unload pressure even more! 6 bar.. 5.7 bar .. 5.6 bar?

Air receiver with pressure gauge


But we need to be careful not to lower it too much, making the pressure differential too small. If the pressure differential is set too small, the compressor will load and unload quickly. This results in very short cycles, for example a few seconds of loading followed by a few seconds of unloading, which causes extra wear on the compressor. So we need to find a balance between compressor cycling time and an acceptable pressure differential.

A standard (average) differential is around 1 bar. If we have a very stable system (big air storage, few fluctuations in air demand) and a supply that is more or less matched with the air demand, we can use a much lower pressure differential, for example 0.5 bar or 0.3 bar. This is especially true in large systems with multiple compressors, where every 0.1 bar saved means thousands of dollars of reduced energy cost per year.

Another reason we need the pressure differential is that it acts as storage for compressed air. Large peaks in air demand can be supplied from the pressure differential (the pressure will drop during this time). This gives the compressor (or a second compressor) time to start up if necessary; when the peaks in air usage are short, even that won't be necessary.
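To get a feel for how the pressure differential, the storage volume and the cycle time hang together, here is a rough sketch. It assumes ideal-gas, constant-temperature behaviour, a constant air demand, and that all storage sits in one receiver. The 3 m3 receiver and the 10 m3/min demand are made-up example values, and the function names are only for illustration.

```python
ATMOSPHERIC_BAR = 1.013  # standard atmospheric pressure in bar


def stored_free_air_m3(volume_m3: float, band_bar: float) -> float:
    """Free air (m3 at atmospheric pressure) stored in the pressure band."""
    return volume_m3 * band_bar / ATMOSPHERIC_BAR


def cycle_time_min(volume_m3: float, band_bar: float,
                   supply_m3_min: float, demand_m3_min: float) -> float:
    """Minutes for one full load/unload cycle at constant demand."""
    if not 0 < demand_m3_min < supply_m3_min:
        raise ValueError("demand must be positive and below the supply")
    stored = stored_free_air_m3(volume_m3, band_bar)
    fill = stored / (supply_m3_min - demand_m3_min)   # loaded: pressure rises
    drain = stored / demand_m3_min                    # unloaded: pressure falls
    return fill + drain


# Hypothetical system: 3 m3 receiver, 14.2 m3/min compressor, 10 m3/min demand
for band in (1.0, 0.5, 0.3):
    seconds = 60 * cycle_time_min(3.0, band, 14.2, 10.0)
    print(f"band {band:.1f} bar -> cycle of about {seconds:.0f} s")
```

With these example values a 1 bar band gives a cycle of about a minute, and halving the band halves the cycle time. Doubling the receiver volume brings the cycle time back to where it was, which is why extra storage lets you run a narrower band.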

Conclusions

The consultant in the question was exaggerating a bit when he said 90k (the question also doesn't mention over what time span or in what currency). But by simply pressing some buttons, we can save at least $2k a year.

If you want to lower your pressure differential, but your cycle times are already short, consider installing a bigger (or second) air receiver. It smooths out pressure fluctuations, meaning it takes longer to 'fill up' and to 'consume' the air in the system.

Like I've been saying in my courses and other articles, every positive action we take to reduce compressed air waste and energy consumption pays back quickly - simply because compressed air is so expensive to produce.

There are many different ways to save money on compressed air (and there is A LOT of money to be saved!) - we barely scratched the surface here. If you're interested in learning more, get my course Industrial Compressed Air Systems - the small (tiny) investment will quickly pay back!
